Hardware: Applies to all Forecr products

OS: Applies to all JetPack versions

Camera Model: Boson 320, 92° (HFOV) 2.3 mm

In this post, we are going to use a FLIR Boson thermal camera with Jetson modules. To do this, we will use GStreamer. The camera module is used the same way on all Jetson modules, so it does not matter which Jetson module you are using.

By default, the GStreamer packages are installed with the JetPack software, so there is no need to install them from scratch.

The full model name of our FLIR Boson camera module is "Boson 320, 92° (HFOV) 2.3 mm". The Boson camera is available in two resolutions, 640x512 and 320x256 pixels. Plug the camera module into the Jetson device with a USB Type-C cable.

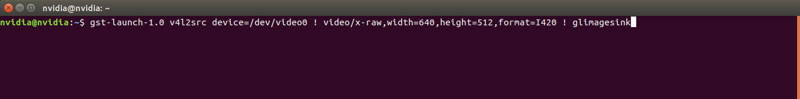

The first pipeline is shown below.

gst-launch-1.0 v4l2src device=/dev/video0 ! video/x-raw,width=640,height=512,format=I420 ! glimagesink

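The exclamation points "!" link the elements of the pipeline to each other. The properties written between two elements, such as "video/x-raw,width=640,height=512,format=I420", are known as capabilities, or "caps" for short. Caps identify what type of data flows between the elements.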
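To make the structure of the pipeline easier to see, here is a minimal Python sketch that assembles the same command from its parts. The helper names "build_caps" and "build_pipeline" are our own (hypothetical) and are not part of GStreamer; the sketch only builds the argument list and does not start the pipeline.

```python
def build_caps(width, height, pixel_format):
    """Return a raw-video caps string such as 'video/x-raw,width=640,...'."""
    return f"video/x-raw,width={width},height={height},format={pixel_format}"


def build_pipeline(device, width, height, pixel_format):
    """Return the gst-launch-1.0 argument list for a simple camera preview."""
    parts = [
        f"v4l2src device={device}",               # source element and its property
        build_caps(width, height, pixel_format),  # caps between source and sink
        "glimagesink",                            # sink element
    ]
    # Elements and caps are joined with " ! ", exactly as on the command line.
    description = " ! ".join(parts)
    return ["gst-launch-1.0"] + description.split()


command = build_pipeline("/dev/video0", 640, 512, "I420")
print(" ".join(command))
```

If you want to start the pipeline from Python, the returned list can be passed to "subprocess.run(command)" on the Jetson device.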

For this pipeline, "v4l2src" is selected as the source plugin. "v4l2src" reads frames from a Video4Linux2 device. When you plug the camera into the device, a "video*" node should appear under the "/dev" directory. The number of the video node depends on how many video devices are plugged into your Jetson device. The format is set to "I420" and the sink plugin is set to "glimagesink".
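If you are not sure which video node belongs to the camera, you can list the nodes before and after plugging it in. The sketch below is one simple way to do that in Python; the function name "list_video_devices" is our own, and it only lists the nodes without checking which device is behind each one.

```python
import glob
import os


def list_video_devices(dev_dir="/dev"):
    """Return the video nodes (video0, video1, ...) in dev_dir, in numeric order."""
    nodes = []
    for path in glob.glob(os.path.join(dev_dir, "video*")):
        suffix = os.path.basename(path)[len("video"):]
        # Keep only names of the form video<number>.
        if suffix.isdigit():
            nodes.append((int(suffix), path))
    return [path for _, path in sorted(nodes)]


for node in list_video_devices():
    print(node)
```

The node that appears only after the camera is plugged in is the one to pass to "device=" in the pipeline.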

After running the GStreamer pipeline, the camera footage should be displayed on the screen.

Another pipeline we are using can be seen below.

Use the command below that matches the sensor you are using.

For the 320 x 256 resolution sensor:

gst-launch-1.0 v4l2src device=/dev/video0 ! video/x-raw,width=320,height=256,format=GRAY16_LE ! videoconvert ! glimagesink

For the 640 x 512 resolution sensor:

gst-launch-1.0 v4l2src device=/dev/video0 ! video/x-raw,width=640,height=512,format=GRAY16_LE ! videoconvert ! glimagesink

The sensor returns the image data in a 14.2 format, that is 14 integer bits plus 2 fractional bits (referred to as 16 bits), but most commercial displays can only show 8 bits of data. In other words, the video is displayed on a 0-255 scale rather than the full 0.00-16383.75 range of the sensor.
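The arithmetic behind these numbers can be sketched in a few lines of Python. Dividing a 16-bit sample by 4 moves the 2 fractional bits behind the decimal point, which gives the 0.00-16383.75 range. The min-max stretch in "scale_to_8bit" is only an illustrative assumption about how 16-bit data could be mapped to 0-255; it is not necessarily what "videoconvert" does internally, and both function names are our own.

```python
def raw_to_fixed_point(raw):
    """Interpret a 16-bit sample as 14.2 fixed point (14 integer, 2 fractional bits)."""
    return raw / 4.0


def scale_to_8bit(samples):
    """Linearly stretch the samples onto the 0-255 display range (min-max scaling)."""
    low, high = min(samples), max(samples)
    if high == low:
        # A flat image has no contrast to stretch.
        return [0 for _ in samples]
    return [round((s - low) * 255 / (high - low)) for s in samples]


print(raw_to_fixed_point(65535))         # 16383.75
print(scale_to_8bit([0, 32768, 65535]))  # [0, 128, 255]
```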

This pipeline lets you get the raw data and customize the streamed data.
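As a starting point for working with the raw data, the sketch below decodes one GRAY16_LE frame in Python. It assumes you already have the frame as a bytes object; how you obtain it from the pipeline is outside this sketch. In GRAY16_LE every pixel is a 16-bit little-endian value, so one frame is width x height x 2 bytes. The function names are our own.

```python
import struct


def frame_size_bytes(width, height):
    """GRAY16_LE stores each pixel as 2 bytes."""
    return width * height * 2


def decode_gray16_le(buffer, width, height):
    """Decode one raw GRAY16_LE frame into a list of rows of integers."""
    expected = frame_size_bytes(width, height)
    if len(buffer) != expected:
        raise ValueError(f"expected {expected} bytes, got {len(buffer)}")
    # "<" means little-endian, "H" means unsigned 16-bit integer.
    pixels = struct.unpack(f"<{width * height}H", buffer)
    return [list(pixels[row * width:(row + 1) * width]) for row in range(height)]


print(frame_size_bytes(320, 256))  # 163840
print(frame_size_bytes(640, 512))  # 655360
```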

First, you must download the Boson Software Development Kit (SDK). Please click here to download the SDK. After downloading it, extract the compressed file, rename the extracted directory to "BosonSDK" and copy it to wherever you want to work.

You should also install the "python3-pip" package and the "pyserial" module.

unzip BosonSDK_rev206.zip

mv SDK_USER_PERMISSIONS BosonSDK

cp -r BosonSDK /home/nvidia

cd

sudo apt-get install python3-pip

pip3 install pyserial

Change the directory and start the compilation as shown below.

cd ./BosonSDK/FSLP_Files

make all

If you get the "-m64" error, delete the "-m64" parameters from the "Makefile" and then run the "make all" command again.
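You can delete the parameters by hand in a text editor. If you prefer to script it, the Python sketch below is one possible way. It assumes that "-m64" appears as a separate flag in the Makefile, and it keeps a backup copy named "Makefile.bak" first. The function names are our own.

```python
import re
import shutil


def strip_m64(makefile_text):
    """Remove every standalone '-m64' flag from the Makefile text."""
    # The lookarounds keep longer names that merely contain "-m64" untouched.
    return re.sub(r"(?<![\w-])-m64(?![\w-])", "", makefile_text)


def patch_makefile(path="Makefile"):
    """Back up the Makefile, then rewrite it without the '-m64' flags."""
    shutil.copyfile(path, path + ".bak")
    with open(path) as handle:
        original = handle.read()
    with open(path, "w") as handle:
        handle.write(strip_m64(original))


# Run from the BosonSDK/FSLP_Files directory:
# patch_makefile("Makefile")
```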

After the compilation process is completed, you should download the zip file from here.

Extract the zip file and change the "OWNER" and "GROUP" variables in the "99-flir.rules" file to match your Jetson module.
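If you are not sure which user and group names to write, the short Python sketch below prints the names of the user who runs it. This is only a helper for looking up the values; it does not edit the rules file. Run it without sudo, otherwise it reports the root user. The function name is our own.

```python
import grp
import os
import pwd


def current_owner_and_group():
    """Return the login name and primary group name of the current user."""
    uid, gid = os.getuid(), os.getgid()
    try:
        owner = pwd.getpwuid(uid).pw_name
    except KeyError:
        owner = str(uid)  # no passwd entry, fall back to the numeric ID
    try:
        group = grp.getgrgid(gid).gr_name
    except KeyError:
        group = str(gid)  # no group entry, fall back to the numeric ID
    return owner, group


owner, group = current_owner_and_group()
print(f"OWNER: {owner}")
print(f"GROUP: {group}")
```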

Copy the "99-flir.rules" file into the "/etc/udev/rules.d" directory. This rule gives users permission to use serial communication with the camera. After copying the file, reboot the system.

sudo cp 99-flir.rules /etc/udev/rules.d/

sudo reboot

Start the python3 interactive prompt and run the commands below in order.

Pay attention to the "os.chdir" and "manualport" paths. "os.chdir" takes the directory that contains the "BosonSDK" folder, and "manualport" is the serial device path of the camera. It is better to check them before running the commands.

Hint: If you want to use the Python script without sudo privileges, add your current user to the "dialout" group. To do this, run the next command and reboot your Jetson (or log out and log in again):

sudo adduser $USER dialout

sudo python3

>>> import os

>>> os.chdir("/home/username")

>>> from BosonSDK import *

>>> myport = pyClient.Initialize(manualport="/dev/ttyACM0") # or manualport="COM7" on Windows

>>> pyClient.bosonRunFFC()

>>> result, serialnumber = pyClient.bosonGetCameraSN()

>>> pyClient.Close(myport)

>>> result

>>> print(result)

>>> print(serialnumber)
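The same steps can also be wrapped in a small function, so that the serial port is closed even if one of the commands fails. This is a minimal sketch that uses only the "pyClient" calls shown above; the function name "read_serial_number" is our own, and the client is passed in as a parameter.

```python
def read_serial_number(client, port="/dev/ttyACM0"):
    """Run an FFC and return (result, serial_number) using the given SDK client."""
    handle = client.Initialize(manualport=port)
    try:
        client.bosonRunFFC()
        return client.bosonGetCameraSN()
    finally:
        # Always release the serial port, even if a command fails.
        client.Close(handle)


# From the python3 prompt on the Jetson, as in the commands above:
#   import os
#   os.chdir("/home/username")
#   from BosonSDK import *
#   result, serialnumber = read_serial_number(pyClient)
#   print(result, serialnumber)
```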

Thank you for reading our blog post.